Self-RAG and Corrective RAG — The Agent Evaluates Its Own Retrieval

Implement Self-RAG reflection tokens and CRAG quality-based fallback. Build retry/fallback logic with LangGraph conditional edges.

Self-RAG and Corrective RAG — How Agents Evaluate Their Own Retrieval

In Part 1, we solved "where to search" with Query Routing. But what if the retrieved documents are useless? The biggest problem with Naive RAG is that it never evaluates retrieval quality. It throws search results directly to the LLM and hopes the LLM will somehow produce a good answer. In this post, we cover two key patterns where the Agent evaluates its own retrieval results and switches to a different strategy when quality is low.

Series: Part 1: Query Routing | Part 2 (this post) | Part 3: Production Pipeline

The Problem After Routing

Let's say Query Routing worked perfectly and selected the right data source. Even so, three failure modes remain.

Related Posts

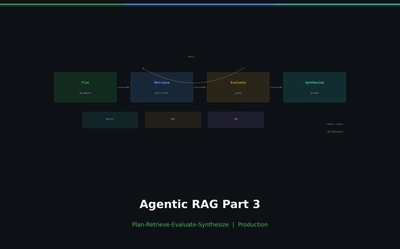

Agentic RAG Pipeline — Multi-step Retrieval in Production

Build a full Plan-Retrieve-Evaluate-Synthesize pipeline. Unify vector search, web search, and SQL as agent tools. Add hallucination detection and source grounding.

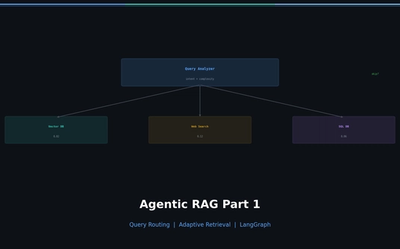

Why Agentic RAG? — Query Routing and Adaptive Retrieval

Diagnose naive RAG limitations, classify query intent, and route to the optimal retrieval source with LangGraph. Implement adaptive retrieval that skips unnecessary searches.

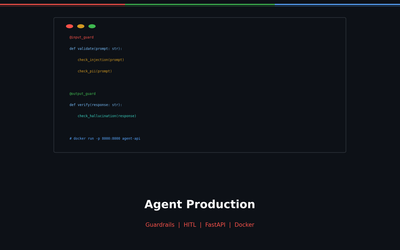

Agent Production — From Guardrails to Docker Deployment

Build safe agents with 3-layer Guardrails (Input/Output/Semantic), deploy with FastAPI + Docker. Includes HITL, rate limiting, and production monitoring checklist.