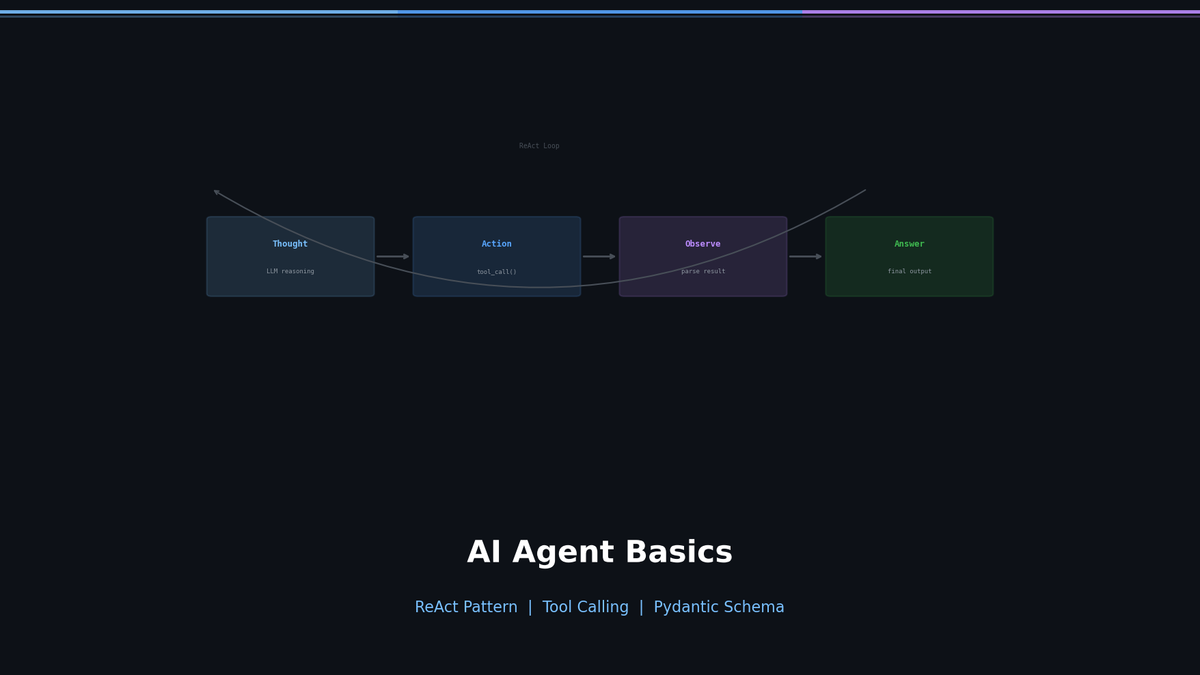

Getting Started with AI Agents — Building a ReAct Agent from Scratch

Give LLMs tools and let them think and act on their own with the ReAct pattern. Build an agent from scratch in pure Python, then evolve it with structured Tool Calling.

Getting Started with AI Agents — Making LLMs Act with the ReAct Pattern

Ask a chatbot such as ChatGPT, with no tool access, "What's the weather in Seoul right now?" and you get: "I don't have access to real-time information." An agent, by contrast, calls a weather API, interprets the result, and answers in natural language. That ability to act on the outside world is what separates agents from chatbots.

In this post, we look at the ReAct pattern, the most fundamental building block of agents. We implement it from scratch in pure Python, and then explore why it evolved into Tool Calling.
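Before going into detail, here is a minimal sketch of the think-act-observe loop that ReAct refers to, applied to the Seoul weather question from the introduction. Everything in it is an assumption made for illustration: `get_weather` is a hypothetical stub rather than a real weather API, `fake_llm` is a scripted stand-in for a model so the code runs offline, and the `Action: tool[input]` text format is just one common way to write ReAct prompts, not necessarily the format used later in this series.

```python
import re

# Hypothetical tool: a stub standing in for a real weather API.
def get_weather(city: str) -> str:
    fake_data = {"Seoul": "18C and clear"}
    return fake_data.get(city, "unknown")

TOOLS = {"get_weather": get_weather}

# Scripted stand-in for an LLM (an assumption so the sketch runs offline).
# It asks for the tool first, then answers once an observation exists.
def fake_llm(transcript: str) -> str:
    if "Observation:" not in transcript:
        return "Thought: I need current weather data.\nAction: get_weather[Seoul]"
    observation = transcript.rsplit("Observation: ", 1)[1].strip()
    return (
        "Thought: I have what I need.\n"
        f"Final Answer: It is {observation} in Seoul right now."
    )

# Assumed action syntax: "Action: tool_name[tool_input]".
ACTION_RE = re.compile(r"Action: (\w+)\[(.*?)\]")

def run_agent(question: str, llm=fake_llm, max_steps: int = 5) -> str:
    """Alternate between model output and tool calls until a final answer."""
    transcript = f"Question: {question}\n"
    for _ in range(max_steps):
        reply = llm(transcript)              # Thought + Action, or Final Answer
        transcript += reply + "\n"
        if "Final Answer:" in reply:
            return reply.split("Final Answer:", 1)[1].strip()
        match = ACTION_RE.search(reply)
        if match is None:
            return "Agent stopped: no action or final answer."
        name, arg = match.groups()
        tool = TOOLS.get(name)
        result = tool(arg) if tool else f"unknown tool {name}"
        transcript += f"Observation: {result}\n"   # feed the result back in
    return "Agent stopped: step limit reached."

if __name__ == "__main__":
    print(run_agent("What's the weather in Seoul right now?"))
```

The `max_steps` cap is a deliberate design choice in this sketch: a model that keeps requesting actions without ever producing a final answer would otherwise loop forever.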

Series: Part 1 (this post) | Part 2: LangGraph + Reflection | Part 3: MCP + Multi-Agent | Part 4: Production Deployment

Chatbot vs Agent: What's the Difference?
