From Evaluation to Deployment — The Complete Fine-tuning Guide

Evaluate with perplexity, KoBEST, and ROUGE-L. Merge adapters with merge_and_unload(), convert to GGUF, and deploy via vLLM or Ollama. Also covers overfitting prevention, data quality, and a hyperparameter guide.


Series: Part 1: LoRA Theory | Part 2: QLoRA + Korean | Part 3 (this post)

In Part 1 we covered LoRA fundamentals and ran our first fine-tuning. In Part 2 we tackled QLoRA and Korean dataset construction. Training is done. Now two questions remain:

- Did the model actually improve? (Evaluation)
- How do we serve it to users? (Deployment)
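To make the evaluation question concrete, here is a minimal sketch of perplexity, one of the metrics this post relies on. It assumes you already have per-token natural-log probabilities for a held-out text. The `perplexity` helper and the sample values are hypothetical and for illustration only; they are not taken from the series code.

```python
import math


def perplexity(token_logprobs):
    """Perplexity = exp of the mean negative log-likelihood per token.

    token_logprobs: natural-log probabilities the model assigned to each
    reference token (hypothetical input format, assumed for this sketch).
    """
    if not token_logprobs:
        raise ValueError("need at least one token log-probability")
    avg_nll = -sum(token_logprobs) / len(token_logprobs)
    return math.exp(avg_nll)


# Made-up numbers: the base model gives each reference token p = 0.25,
# the fine-tuned model gives each reference token p = 0.5.
base_logprobs = [math.log(0.25)] * 4
tuned_logprobs = [math.log(0.5)] * 4

print("base :", perplexity(base_logprobs))
print("tuned:", perplexity(tuned_logprobs))
```

Lower perplexity on the same held-out text means the model is less surprised by it, which is one signal (not proof) that fine-tuning helped.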

Related Posts

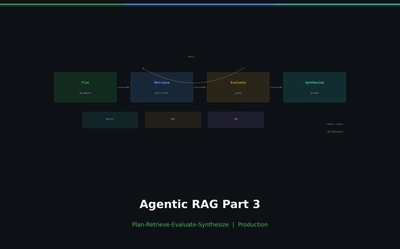

Agentic RAG Pipeline — Multi-step Retrieval in Production

Build a full Plan-Retrieve-Evaluate-Synthesize pipeline. Unify vector search, web search, and SQL as agent tools. Add hallucination detection and source grounding.

Self-RAG and Corrective RAG — The Agent Evaluates Its Own Retrieval

Implement Self-RAG reflection tokens and CRAG quality-based fallback. Build retry/fallback logic with LangGraph conditional edges.

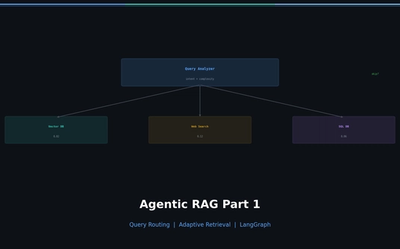

Why Agentic RAG? — Query Routing and Adaptive Retrieval

Diagnose naive RAG limitations, classify query intent, and route to the optimal retrieval source with LangGraph. Implement adaptive retrieval that skips unnecessary searches.